The 'Obvious Thing' Nobody Noticed

The hazard was visible. The instructor just didn't have the capacity to see it.

Part 2 of 2

Eighteen-year-old Linnea Mills was taking an Advanced Open Water course. She was new to drysuit diving. Her instructor was relatively inexperienced. Time was pressing. And there was a problem whose implications neither of them fully understood: Linnea's new drysuit valve didn’t match the inflator hose on the BCD. That meant the drysuit couldn't be connected to her cylinder gas. There was more. She was also overweighted, carrying 45 pounds of lead with a BCD that had a 25-pound lift capacity, and she had no way to add gas to the suit for buoyancy compensation.

To an experienced eye reviewing the case afterwards, the hazard is obvious. A student in cold water, overweighted, unable to inflate her suit, with a BCD that couldn't generate enough lift to compensate. But in the moment, for an inexperienced instructor managing a course amid limited daylight, cold water, unfamiliar gear, and commercial and social pressures to get the dive done, that configuration wasn't recognised for what it was.

Linnea died. The system failed her.

Not a single person, in a single moment, making a single bad decision. A system that had been drifting: quietly, incrementally, in ways that felt locally reasonable, until the margin collapsed entirely. Safety science research has documented this pattern many times, so it isn't a diving 'thing'; it is a human-in-the-system 'thing'.

This blog is about understanding how that happens, and what determines whether an instructor has the cognitive architecture to catch it before it becomes fatal. It follows on from Part 1, which explained some of the key concepts.

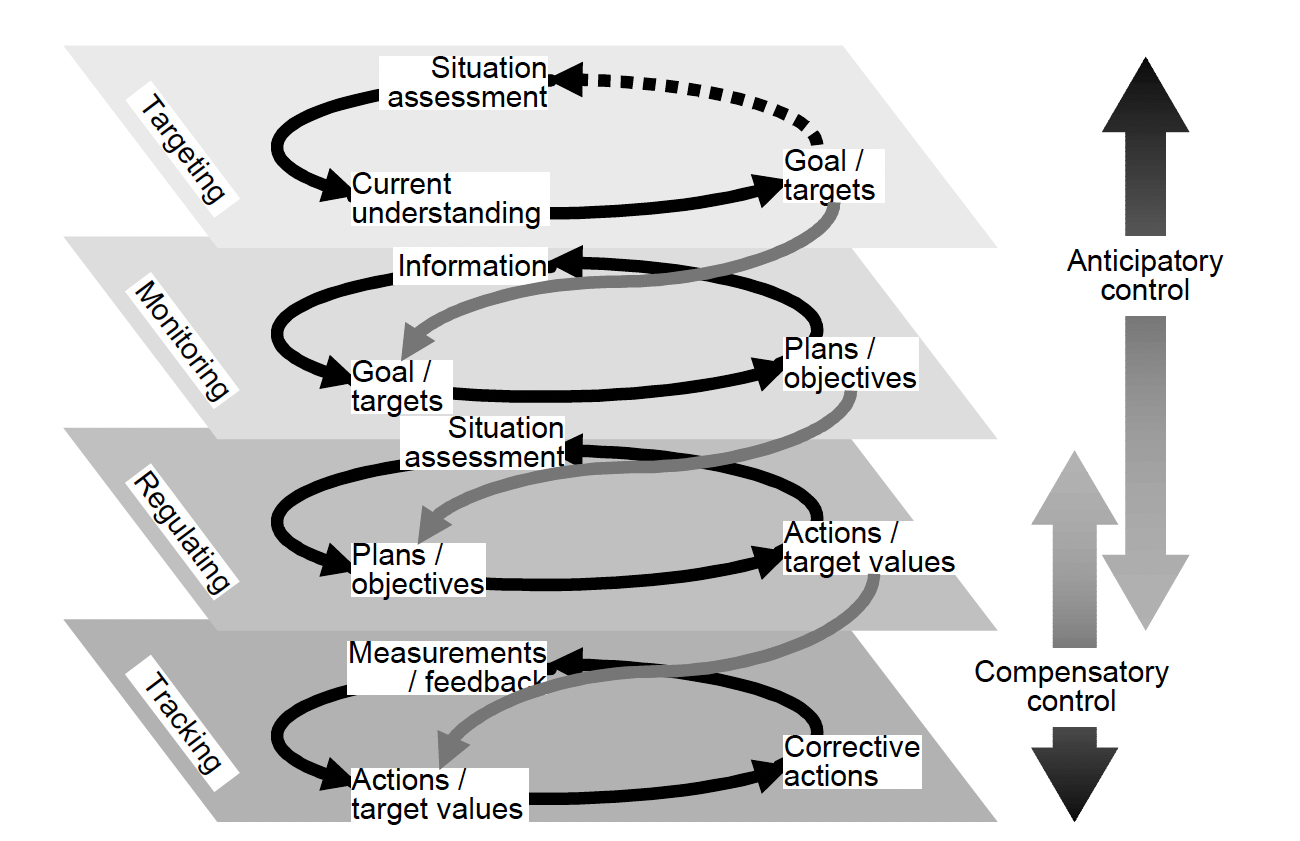

COCOM - Layers of Control within the 'System'

ECOM - Layers of Task Fidelity

ECOM from Hollnagel & Woods (Joint Cognitive Systems, 2005, Chapter 7)

Why the instructor didn't see it

It is tempting, when reading a case like Linnea's, to locate the failure at the point of entry: on the lakeside. That is when the instructor should have joined the dots and called the dive. The incompatible hose and drysuit valve meant no drysuit buoyancy, and the lead she carried was beyond the lift capability of the BCD.

And that moment did exist.

But examining why it was missed requires more than noting that the check should have happened. It requires understanding what cognitive resources the instructor had available in that moment to run such a check.

Part 1 covered the Extended Control Model (ECOM). This model describes control as operating simultaneously on four layers.

The tracking layer handles immediate physical tasks: managing equipment, organising the dive, dealing with the unfamiliar gear in front of them.

The regulating layer manages the short-term flow of the course: getting students ready, sequencing the skills, keeping the session moving.

The monitoring layer watches the whole system against expectations: is anything here outside normal parameters? Is there something about this configuration that doesn't fit?

The targeting layer sets and revises overarching goals: is this dive still appropriate to proceed with, or does something need to fundamentally change?

What the Linnea Mills case illustrates is a monitoring layer that was overwhelmed and a targeting layer that never had the resource to engage. The instructor was managing multiple competing demands: an unfamiliar student, new equipment, time pressure from the end of the daylight window, the commercial expectation of completing the course. Each of those demands sat at the tracking and regulating layers. They consumed bandwidth. The monitoring layer, the one that would have asked "does this configuration actually work?", was not running with any capacity or bandwidth to spare.

This is not a description of negligence. It is a description of a cognitive system operating under conditions that systematically stripped it of the capacity to catch what it needed to catch. Capacity takes time and effort to develop: with practice, a task becomes ‘automatic’, and the cognitive overhead needed to execute it falls.

Driving and diving. Not a spelling mistake, but a relevant metaphor for diving instruction

Think about driving in a city you know well. The route is automatic. Your attention is largely free, so you notice things: the cyclist pulling out, the pedestrian near the kerb, the junction that looks different from last week. Now put yourself on an unfamiliar road in an unfamiliar country, with the steering wheel on the wrong side, in a car with different controls. Suddenly, driving consumes nearly everything. You miss the cyclist. You don't notice the junction. You are not distracted; you are overloaded. The monitoring process that usually runs quietly in the background has been displaced by the demands of the basic task.

Teaching is the unfamiliar road. Your own diving is the familiar route.

An instructor who still needs conscious effort to manage their own buoyancy, depth, and position in the water does not have much left over for the class environment. But even an instructor with solid personal diving skills faces a new version of this problem in a teaching context. The demands of instruction itself (student management, skill sequencing, group positioning, equipment oversight) are a new set of roads they don't yet know automatically.

For the experienced instructor, supervising students has become familiar enough that it runs as a background scan, flagging anomalies without demanding full deliberate attention. For the less experienced instructor, every student interaction, every equipment question, every piece of unfamiliar gear is a conscious act.

Linnea's instructor was on unfamiliar roads in multiple directions simultaneously: a new student, a new type of equipment, time pressure they weren't accustomed to managing, an emergency plan that hadn't been built. The tracking and regulating layers consumed everything they had. The monitoring layer had nothing left to run on. The wider certification and quality management systems also contributed to the failure: they placed the instructor in that environment without either the instructor or the system understanding how the instructor would perform there.

The systemic pressure the instructor was inside

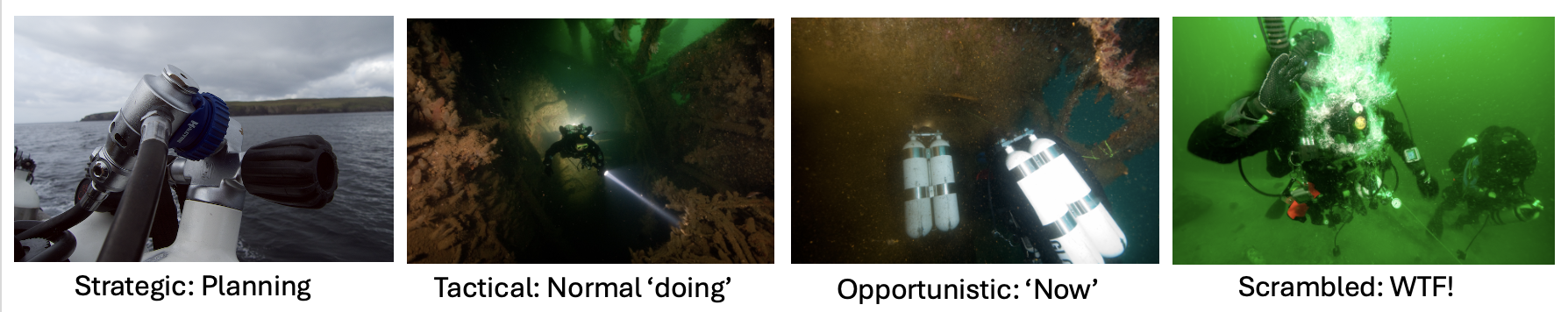

What makes the Linnea Mills case instructive rather than merely tragic is the way it illustrates what the Contextual Control Model (COCOM) calls the difference between strategic and opportunistic control and, specifically, what determines the mode in which an instructor arrives at the water's edge.

In strategic control, you have bandwidth and time to hold multiple goals, think in terms of how the situation is likely to develop, and make decisions grounded in a clear mental model of what could go wrong. Strategic mode is where the experienced instructor operates before entry: thinking through not just the lesson plan but the contingency structure.

Which aspects of this student's configuration have I actually verified?

What is my abort criterion?

What happens if I need to assist a student at depth?

Do I have a credible dive plan?

Do I have a credible emergency action plan? How do I know?

These are targeting-layer questions. When they are answered before entry, they generate pre-set thresholds and criteria that remain active even when the monitoring layer goes thin. They don't require creative thinking under pressure to fire; they fire because they were loaded in advance.

Linnea's instructor was not in strategic mode before entry. The accumulation of pressures (inexperience, unfamiliar equipment, time pressure, commercial expectation) meant the instructor was already in opportunistic control before the dive began, reacting to the most pressing demand in each moment. The incompatible inflator hose didn't register as a hazard, not because the instructor didn't care, but because the monitoring process that would have flagged it wasn't running with sufficient resource.

This is what "practical drift" looks like at the level of individual cognitive control. The gap between how the course should have been run and how it was run wasn't born of wilful corner-cutting. It grew from the normal human tendency to adapt to the demands of the immediate, and the gradual, unnoticed narrowing of the picture that follows when those demands are too high.

What the monitoring layer needs to run

There is a specific structural problem here that instructor development programmes rarely address directly: the quality of group monitoring available to an instructor is not fixed by their certification level. It is a function of how much attentional bandwidth their tracking and regulating layers consume in any given set of conditions, with any given student or group.

This has practical implications. An instructor running their first drysuit student has never run that pattern before. It is unfamiliar, demanding, and sits at the tracking and regulating layers. Their monitoring layer, the one that watches the whole picture, is thinner than it would be with a more familiar configuration. That doesn't mean the dive shouldn't happen. It means the instructor needs to recognise that their monitoring bandwidth is reduced, and compensate: a more conservative plan, a more explicit pre-set list of what they are specifically looking for, more deliberate time on the surface to run the checks that the monitoring layer would normally run automatically.

The experienced instructor in the same scenario does something structurally different before anyone enters the water. Their targeting layer is working during the briefing, not just "here is the plan" but "here is what I am specifically checking for, here is my abort criterion, here is what this configuration needs to be capable of before we enter." Those questions generate thresholds. Those thresholds remain active even when everything else is demanding attention. When student and instructor reach the waterline, the experienced instructor has loaded the monitoring layer in advance with specific criteria to trigger decisions. The less experienced instructor often hasn't, not from carelessness, but because they haven't yet learned to recognise that this is the work that needs to be done, and no one has taught it to them in a way that makes the knowledge ‘sticky’ and easily retrieved.

Fundamentally, this is the real difference between a competent diver and a competent instructor. It is not additional technical knowledge. It is the ability to run a continuous, resourced monitoring process across the whole group, and to structure pre-dive preparation in a way that keeps that process running even under the load that teaching inevitably places on the lower layers.

What Linnea's case should change about how we think about instructor failure

When something like this happens, the usual response is to locate the failure in the individual: the instructor's inexperience, the missed check, or the inadequate emergency plan. Those things were real. But framing the problem that way misses the most important question: what were the conditions that produced an instructor whose monitoring layer had no resource to catch what needed catching?

The inexperience was real, but inexperience alone doesn't determine cognitive architecture. What determines it is the load the situation placed on the tracking and regulating layers relative to the capacity available. Time pressure, unfamiliar equipment, commercial expectation, inadequate preparation: each of those factors consumed bandwidth that the monitoring layer needed. Remove any one of them and the picture might have been different. Remove several, and an experienced, well-supported instructor would have had the capacity to flag the configuration before entry.

The system set up the conditions in which this outcome was possible. The instructor was inside those conditions, not above them. That is not an excuse for the outcome. It is the explanation that actually points somewhere useful: toward the changes in training design, course scheduling, equipment verification, and mentorship that would reduce the probability of the same conditions arising again.

The limits of cognitive capacity described here also apply to dive centre managers, diving safety officers on scientific diving operations, and expedition leaders. Off the top of my head, I can think of numerous examples where capacity was ‘lost’ because the lower layers were ‘full’ and there was nothing left to step back, see the bigger picture, and end the dive.

This brings us back to the point about ‘anyone can thumb a dive at any time for any reason’. Often this discussion is based around the lack of psychological safety, but there is also a competence and cognitive bandwidth issue. The divers involved might be so consumed with ‘fighting fires’ that ending the dive doesn’t even cross their mind.

Bringing it back to Part 1

Part 1 of this series followed James on the M2, a diver whose monitoring layer went quiet while he was absorbed in a photograph, and who surfaced far downstream of his buddy as a result. The mechanism was the same as in Linnea's case: the lower ECOM layers consumed the resource the monitoring layer needed, and the broader picture went dark.

The difference is scale and consequence. James's outcome was a frightening dive and a valuable debrief. Linnea's was a fatality.

In both cases, the question that matters most is not "what did this person fail to do?" It is "what were the conditions that left the monitoring layer without resource at the moment it was most needed?" That question is answerable.

The answers shape how instructors prepare, how courses are designed, how equipment checks are structured, and how we support less experienced instructors to recognise that the pre-dive targeting-layer work is not procedural box-ticking. It is the cognitive act that determines whether they will have the capacity to catch what matters once they are in the water.

The monitoring layer can't catch what it hasn't got the resource to look for. Building that resource, and understanding what erodes it, is the work that needs to be done to help divers and instructors be better than yesterday.