The Shortcut That Gets You Home — and the One That Doesn't

Every diver makes dozens of decisions before they even enter the water. Is this site safe today? Is my gas plan adequate? Should we turn the dive now or push a little further? Most of these decisions don't feel like decisions at all; they feel like obvious conclusions, arrived at quickly and with confidence. That speed and confidence are the product of heuristics, and understanding them is one of the most practically useful things a diver can do for their own safety.

What Heuristics Actually Are

A heuristic is a mental shortcut - a rule of thumb that allows you to reach a workable decision quickly, using limited information, under time pressure. Kahneman and Tversky's foundational work on judgment under uncertainty established what most people think of when they hear the term: fast, intuitive processes (what Kahneman later called System 1 thinking) that operate below conscious awareness and rely on cues like familiarity, salience, and pattern recognition. When you glance at a dive site and assess conditions in seconds, you're using heuristics. When you check your buddy's kit and feel confident without consciously working through a mental checklist, you're using heuristics. When you trust an instructor because they appear confident and knowledgeable, you're using heuristics.

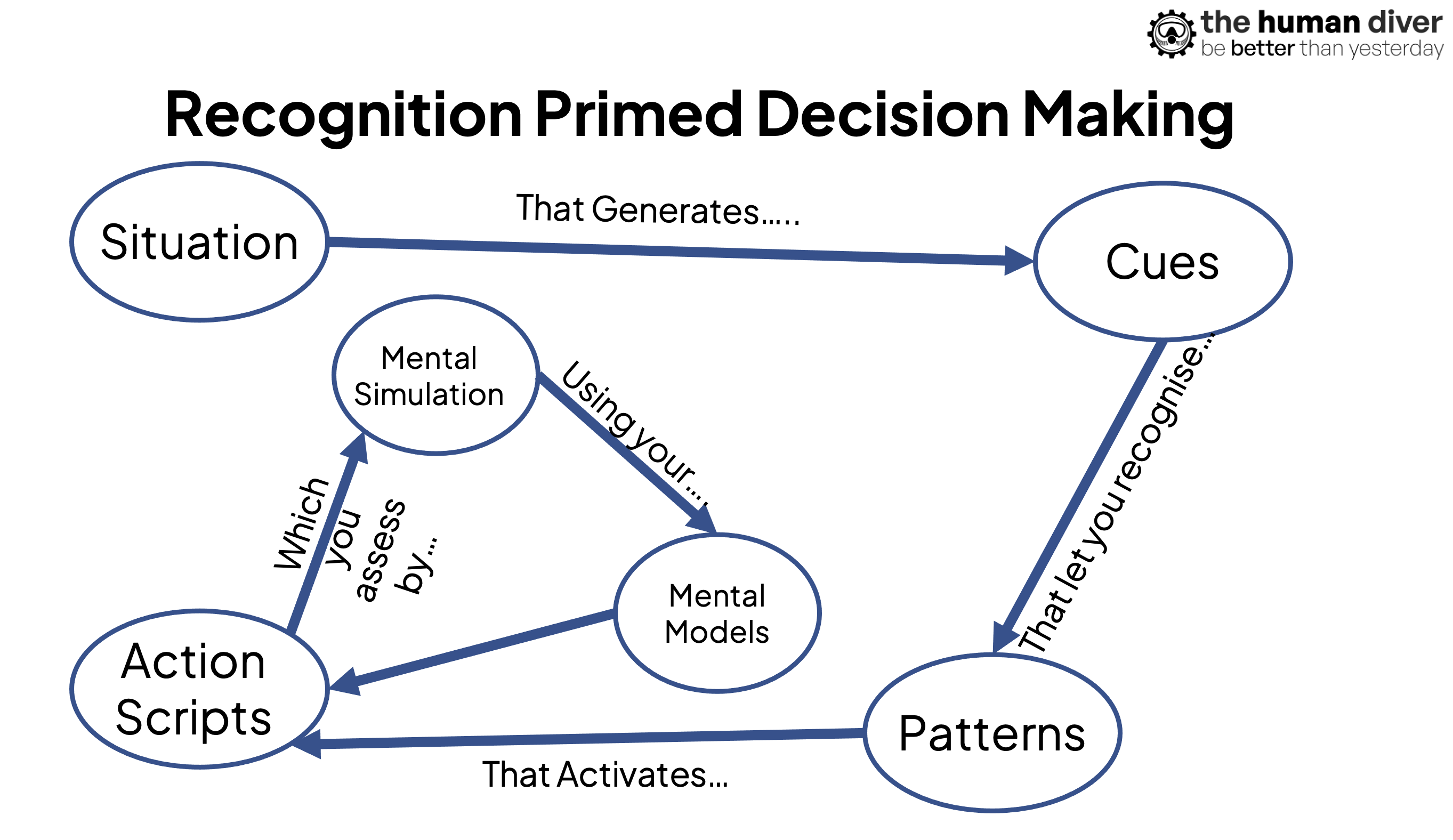

Gary Klein's research on naturalistic decision-making added important texture to this picture. Working with firefighters, military commanders, and other high-stakes practitioners, Klein found that experienced professionals don't typically deliberate between options. Instead, they recognise a situation as familiar, generate a plausible course of action, and mentally simulate it. When it feels right, they act. This recognition-primed decision process is deeply heuristic in nature, but Klein's point was that in environments with valid, learnable cues and substantial experience, these shortcuts are not primarily sources of error. They are powerful expert tools. A classic example is Sully's ditching of Cactus 1549 on the Hudson River: the emergency was outside the scope of normal training, but experience helped produce a course of action that was 'satisficing', that is, good enough.

Gigerenzer's "adaptive toolbox" framework sits alongside both. In his view, heuristics are ecologically rational: they work well when matched to the right environment, and the goal isn't to eliminate them but to understand when they fit and when they don't. Gigerenzer strongly advocates being 'risk savvy', which means understanding the strengths and weaknesses of how we make decisions under uncertainty.

So heuristics are processes. They are the strategies your brain uses when time is short, information is incomplete, and deliberate analysis would take too long or demand too much cognitive resource. In diving, as in surgery, construction, and emergency medicine, they are not optional extras; they are the primary mode of operational sense-making and decision-making.

Where Biases Come From

Biases are what happen when heuristics misfire. They are the systematic errors that emerge when a mental shortcut is applied in a context it wasn't suited for, when salient cues are misleading, or when the environment doesn't provide the feedback necessary for good intuitive calibration. The distinction is important because it reframes the problem: the issue isn't that you use mental shortcuts, it's that the shortcuts sometimes generate predictable, recurring errors that you don't notice because the shortcut feels just as confident when it's wrong as when it's right. It is only afterwards that we can see the decision was 'flawed'. The recent blog about two divers who died after entering the Scylla wreck without lining in is an example of this.

Research across medicine, surgery, construction safety, and cybersecurity consistently identifies the same small cluster of biases causing outsized problems.

Availability bias leads people to weight recent, vivid, or easily recalled experiences more heavily than base rates or objective data. If your last ten dives at a particular site went smoothly, availability pulls you toward assessing conditions as manageable today, even when the current data suggests otherwise.

Representativeness bias causes you to categorise a new situation based on how closely it resembles a familiar pattern — "this looks like last time" — which can blind you to subtle but important differences in gas consumption, decompression profile, or environmental conditions.

Anchoring causes you to fix on an initial data point and fail to sufficiently adjust as new information arrives: if you planned a 45-minute bottom time, you may resist revising that even as circumstances change.

Overconfidence and the illusion of control are particularly well-documented in surgical and construction safety research, and experienced divers are at least as susceptible — possibly more so, because experience in a domain genuinely does improve some judgments while leaving others stubbornly uncalibrated.

Confirmation bias sits underneath many of these: once you've formed an assessment, you tend to seek information that confirms it and discount information that challenges it. In a pre-dive briefing, in a buddy check, in the moment you're reading your computer at depth, you are more likely to notice the data that supports what you already think than the data that should give you pause.

The Diving-Specific Picture

No published research to date has examined heuristics and biases specifically in recreational or technical SCUBA populations, which is a gap worth naming. But the mechanisms are not domain-specific; the human cognitive architecture that generates availability bias in an emergency department triage nurse generates it in a dive leader assessing a site briefing. The conditions that make heuristics more prone to error — novel or rare events, poor feedback loops, high emotional investment, social pressure, time pressure, fatigue — are consistently present in diving.

Consider the experienced diver who has completed the same dive profile dozens of times without incident. Their intuition about that profile is probably well-calibrated, but it has been calibrated against the dives that completed successfully. The near-misses and the subtle errors caught by luck rather than skill don't feed back into the system in the same way, because they are often written off as 'normal'. The result is a confidence level that reflects experience without fully reflecting risk. Overconfidence isn't arrogance in this context; it's a predictable output of a social and cognitive learning system that receives only partial feedback, because structured debriefs, reflection, and the sharing of knowledge are not the norm in diving.

The "this looks like last time" heuristic (representativeness) is particularly consequential in technical diving, where gas planning, decompression obligations, and team composition create compounding dependencies. A dive that superficially resembles a previous one can differ in ways that matter: slightly different depths, slightly different exertion rates, a buddy who is newer or less fit, a drysuit that wasn't quite properly maintained. Pattern recognition speeds decisions, but it also compresses the detail that makes two situations genuinely distinct. A good example is the case of the four divers on the IJN Sata whose planned 2-hour dive became a 5.5-hour one after they became entrapped in the wreck.

What Can Actually Help

The research from medicine and construction is reasonably consistent about what works for debiasing, and the mechanisms translate to diving. Structured pre-dive checklists deliberately slow heuristic thinking and create checkpoints where 'System 2', the slower and more analytical way of thinking, can be engaged. Simulation and scenario training help because they create the kind of feedback-rich environment that calibrates intuition properly: you practise the situation, observe the outcome, and update. Explicit education about common biases appears to reduce their influence not by eliminating intuitive processing but by creating a metacognitive layer, an awareness that your confidence is not the same thing as your accuracy.

Perhaps most importantly, team dynamics and psychological safety matter. In settings where briefings are genuinely two-way, where divers can surface a concern without social cost, and where turning a dive is treated as a competent decision rather than a failure, the social conditions exist for biases to be caught before they compound into incidents. Many of the conditions that make biases dangerous, like overconfidence going unchallenged or anchoring on a plan nobody wants to revise, are social phenomena as much as cognitive ones.

Understanding heuristics and biases isn't about distrusting your instincts. It's about knowing which situations your instincts are well-calibrated for, and which ones deserve a slower, more deliberate look before you commit. In diving, as in surgery and firefighting, the goal isn't to eliminate fast thinking. It's to know when to pause it.