Why does nothing change? Why do the same failures keep happening?

Between 2013 and 2022, there were 241 confirmed rebreather fatalities (data presented at RF4.0). That is roughly 24 per year, every year, for a decade. Over the same period the rebreather market grew significantly, training numbers increased, equipment became more sophisticated and reliable, and the diving community continued to invest time, money, and energy into safety improvements.

Twenty-four deaths per year. For the ‘open circuit’ sports diving space, it is around 200 fatalities per year.

Unfortunately, we have neither an accurate numerator nor an accurate denominator. We don’t know the population of those exposed. We don’t know how many people die. We also don’t know how many people get harmed physically or psychologically during or after diving, and then give up because “they’ve had enough of this ****”.

We have many unknowns.

I have recently submitted a paper to a journal that attempts to explain why that number has not moved, and more importantly, why it is unlikely to move until the community recognises and does something about the structural factors that influence or drive unwanted outcomes in diving. The paper is grounded in the scientific literature, not just something ‘Gareth made up.’ This blog is a summary of the arguments made in that paper. I want to make the argument as accessible as possible, because the people who most need to hear it are not academics. They are instructors, dive leaders, training agency staff, and anyone in this community who has influence over how we train, how we investigate, and how we respond when things go wrong.

The short version: for rational reasons, diving safety has been looking in the wrong place, and the data has been telling us this for a long time.

The Dominant ‘Safety’ Model

The way the diving industry currently approaches safety rests on three assumptions:

Accidents are primarily caused by physiological or technical failures.

The solution is better equipment, stricter protocols, and improved individual skill.

Safety is adequately measured by how many people die and how many DCS cases get treated – ‘unsafeties’ are counted.

None of those assumptions is technically wrong. Proficient in-water skills are important. Equipment reliability does matter. DCS is a real hazard worth researching and managing (as you can see from the recent DAN study).

The problem is what this ‘safety’ model excludes. It excludes the team dynamics, the communication failures, the pressure to continue when the signs said stop, the instructor who taught the standard but didn't model it in their own diving, the training agency that collected incident data but never analysed it for systemic patterns, and the diver who didn't report the near-miss because they correctly judged that nothing useful would happen if they did.

All of those things appear in accident after accident when you look at the full picture. They almost never appear in the post-event discussion. Instead, we focus on what the diver at the sharp end did or didn't do, the proximal cause, the last action in the chain, and that is where we stop.

There are rational reasons for this behaviour:

It is cognitively easy,

It is socially safe, and

It is legally convenient.

Unfortunately, if we want to learn and improve, the research shows that this approach is almost entirely useless for preventing the next similar event.

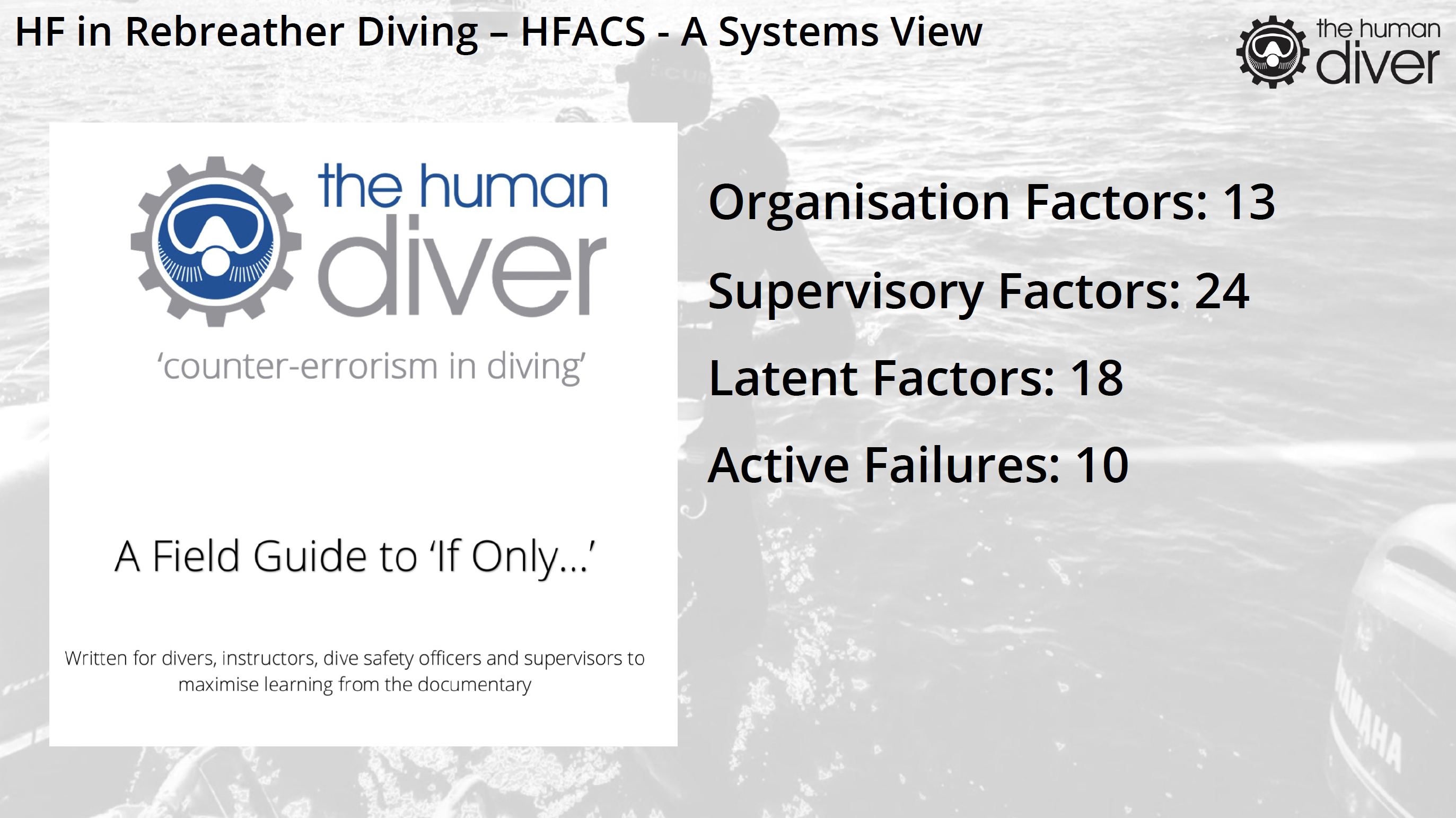

What the Data Actually Shows About Cause

The If Only… documentary examines the circumstances and behaviours leading to the death of Brian Bugge, who was using a CCR while on a diver training programme. What we look for shapes our perspective of how an event happens. Look for human error, you’ll find it. Look for equipment failure, you will find it. I undertook the analysis through the lens of human factors and system safety, and I used the Human Factors Analysis and Classification System (HFACS) taxonomy to help structure actions, behaviours, and outcomes. When the contributing factors were identified and categorised, the distribution looked like this:

Active factors are the actions and behaviours of the diver immediately before the outcome. These are what nearly all post-event diving discussion focuses on, despite accounting for fewer than one in six of the total contributing factors in this case. The remainder, more than five-sixths, were upstream, in the training system, in the supervisory culture, in organisational incentives, and in the social norms that made certain decisions feel normal even when they were drifting away from safe practice.

This weighting towards higher level factors was not a surprise to me, because it is consistent with what safety science has found across aviation, healthcare, oil and gas, and nuclear power. Accidents in complex systems are not the product of one person making one bad decision; those individuals just put the icing on the cake of latent conditions. Those accidents are the product of conditions, many of them invisible, many of them present for months or years before the triggering event, that converge in a way that makes harmful outcomes emerge. The Linnea Mills case is another example of this.

Understanding those conditions is what effective safety work looks like. Unfortunately, sports diving currently does very little of it. As a caveat, if the industry does do this, it doesn’t talk about it. From personal experience (and my research evidenced this), there are conversations, but they are not documented for fear of discovery in the event of a legal case being raised.

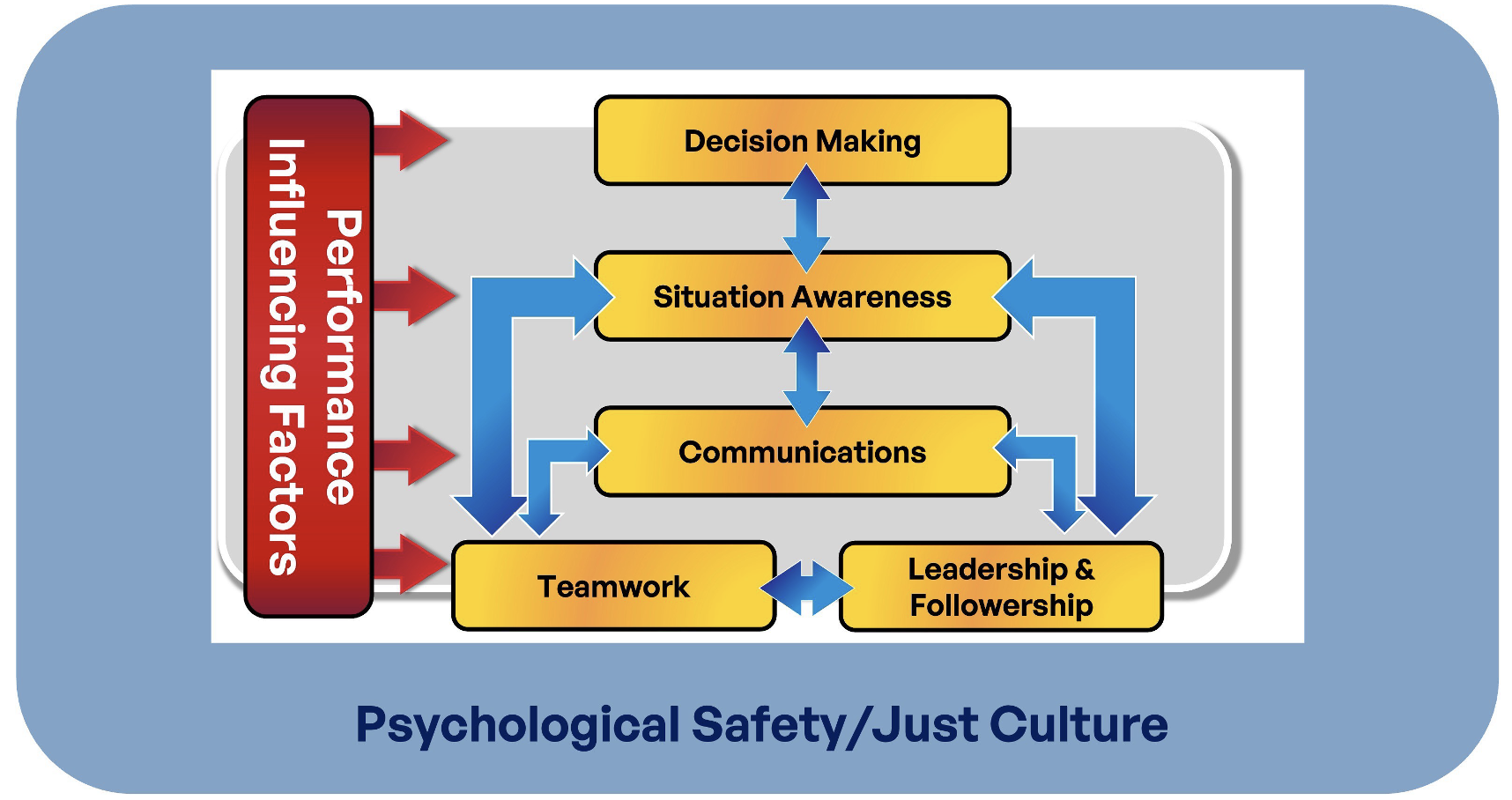

The Non-Technical Skills Gap

What language do we use to communicate good, bad, or indifferent diver or instructor performance? Do we focus on technical proficiency or something else?

There is something missing from every major recreational and technical diving certification curriculum. No major training agency has an explicitly embedded programme for human factors (HF) or non-technical skills (NTS); the decision-making, situation awareness, communication, teamwork, and leadership capabilities that the evidence consistently identifies as critical contributory factors to adverse outcomes when they are missing or ineffective. While some organisations have a module that mentions them, and some have a few slides that define them, none has an embedded, assessed, developed programme.

This is not a small gap. The DAN dataset covering nearly 1,000 diving fatalities found that the dominant triggering factors were not physiological or equipment failures. They were gas mismanagement, buddy separation, poor situation awareness, and the absence of a functioning team response when conditions deteriorated. These are human performance failures. They occur in novice divers, in technically competent divers, in experienced divers, and in divers who know the rules and have followed them before. What they have in common is not necessarily ignorance of the standard; it is the absence of trained, practised, and debriefed non-technical capability to perform under pressure.

Think about the last dive you did with a team. Did you agree, explicitly and out loud, on what would make you turn the dive? Not what the standard says. What your team agreed on, for that specific dive, on that day, in those conditions? Did you discuss what would happen if one of you signalled a problem and the other didn't see it? Did you talk about how you'd make the call if the conditions on the surface looked different from what you briefed?

Most teams don't. It isn’t because they're careless, but because the norm, the thing that experienced, respected divers around them model, is “Why do we need to run a dive brief? It’s only a dive, what could possibly go wrong?” That level of explicit pre-dive coordination can feel excessive when you've dived with someone fifty times before. It feels like you're treating your experienced buddy like a student. The social signal in most technical diving communities is that the more experienced you are, the less you need to spell things out.

This is one of the most dangerous assumptions in diving, and it is completely invisible because it almost always works.

Most of the time, the shared mental model really is enough. Most of the time, the signals are read correctly. Most of the time, the unspoken turnaround decision-points match across team members. And if we don’t receive the communication or message first time, we can often make a good guess, based on the context, until we find out the real message.

And every time it works, the assumption gets reinforced. Until the time it doesn't.

The evidence from aviation and healthcare on this is clear. Explicit coordination, stating the obvious, agreeing the plan, naming the decision points before you need them – they all reduce the frequency of team failures. If we merely assume we have a shared mental model, we risk finding out at the worst possible moment that the understanding was not shared at all!

There is another dimension to the NTS gap that shows up specifically in peer-equivalent recreational diving teams, and we need to call that out. Most of the crew resource management (CRM) research that gets ported into diving was developed for hierarchical teams, such as cockpit crews and surgical teams, where one of the problems is a junior member who won't challenge a senior one even when they see that something isn’t quite right and needs clarifying or challenging. The solution in those contexts is assertion training: teaching people to speak up to authority, and teaching those in authority to be on the lookout for these challenges and to listen up.

That isn't the primary problem in most recreational and technical diving teams. When you're diving with an equal, someone you trust and respect, the pressure not to speak up comes not from authority but from not wanting to seem uncertain, not wanting to slow the team down, not wanting to be the one who questions a plan that everyone else seems comfortable with. The mechanism is different. And the intervention is different too. It is not about teaching people to challenge authority. It is about building team norms, where stating the obvious, asking for confirmation, and identifying uncertainty, are all treated as signs of competence rather than weakness.

None of this is in any agency's standard curriculum. None of it is assessed. And because it is not assessed, it is not practised, and because it is not practised, it is not available when conditions deteriorate and the team needs it most.

The fatality data does not show divers dying because of poorly designed equipment or bad luck. It shows them dying because of failures in how divers communicate, how they establish and maintain psychological safety, and how they succumb to cognitive biases; failures that the ‘investigations’ have never identified because they aren’t looking for them. That is the non-technical skills gap. And it has been there for many years.

The Incident Learning Gap

NTS is one side of the coin: how we prevent adverse events from occurring, and what we do to improve performance. However, there is another structural problem, and in some ways, it is more fundamental to reducing adverse events.

The diving community does not have a functioning incident learning system. That might be considered contentious, especially given that there are organisations who manage incident reporting systems. So, let me explain.

A learning system requires five things:

near-miss reporting infrastructure & technology that people actually use,

investigation methods that look 'up and out' from the individual to the system conditions that shaped what happened,

a just culture that provides psychological safety for transparent reporting,

a shared taxonomy that makes pattern detection possible across the population of events, and

a feedback mechanism that takes the lessons identified and feeds them back in a timely manner, across the system, to reduce the likelihood of similar situations developing, and to help build capacity in divers and diving instructors.
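To make the fourth item more concrete, here is a minimal sketch of what a shared taxonomy makes possible once every report is coded the same way. It borrows the four HFACS tiers as the classification. Everything else is an assumption for illustration: the `Report` structure, the function names, and the three sample reports are invented and are not real incident data or any agency's actual schema.

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum


class Tier(Enum):
    # The four HFACS tiers, used here as a stand-in for a shared taxonomy.
    UNSAFE_ACTS = "unsafe acts"
    PRECONDITIONS = "preconditions for unsafe acts"
    SUPERVISION = "unsafe supervision"
    ORGANISATION = "organisational influences"


@dataclass
class Report:
    # Hypothetical report record: an id, a year, and coded contributing factors.
    report_id: str
    year: int
    factors: list[tuple[Tier, str]] = field(default_factory=list)


def tier_distribution(reports):
    """Share of all coded factors that falls in each tier."""
    counts = Counter(tier for r in reports for tier, _ in r.factors)
    total = sum(counts.values())
    return {tier: counts[tier] / total for tier in Tier} if total else {}


def recurring_factors(reports, min_reports=2):
    """Factors that appear in at least `min_reports` separate reports."""
    seen = Counter()
    for r in reports:
        for key in set(r.factors):  # count each factor once per report
            seen[key] += 1
    return {key: n for key, n in seen.items() if n >= min_reports}


# Invented sample reports, for illustration only.
reports = [
    Report("A", 2023, [(Tier.UNSAFE_ACTS, "gas not analysed"),
                       (Tier.PRECONDITIONS, "time pressure"),
                       (Tier.SUPERVISION, "no pre-dive brief")]),
    Report("B", 2024, [(Tier.PRECONDITIONS, "time pressure"),
                       (Tier.ORGANISATION, "no incident feedback")]),
    Report("C", 2024, [(Tier.UNSAFE_ACTS, "buddy separation"),
                       (Tier.PRECONDITIONS, "time pressure"),
                       (Tier.SUPERVISION, "no pre-dive brief")]),
]

for (tier, label), n in recurring_factors(reports).items():
    print(f"{label} ({tier.value}): seen in {n} reports")
```

The point of the sketch is the design choice, not the code: a pattern such as "time pressure" only becomes visible across reports if every organisation codes it with the same label in the same tier.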

Diving has fragments of all of these. Divers Alert Network, the BSAC, and DOSA have incident reporting systems. Some agencies collect safety data. Some communities talk openly about near-misses in informal settings. But none of it is assembled into anything that functions as an incident learning system.

The reason people don't report to the organisational channel is not that they don't want to share. In my MSc research across 676 divers and four focus groups, the data was clear: divers actively share stories with their peers, on social media, and in informal settings. What they withhold is formal reports to organisations, and they withhold them in direct proportion to their belief that organisations won't do anything useful with the information. The more a diver believes their agency doesn't learn from incidents, the more specifically they avoid the organisational channel. They don't avoid the other channels. Just that one.

This is calibrated, rational behaviour. The system is broken, and the people within it know it is broken, and they are adapting sensibly to that reality. Campaigns telling divers to report more will not materially change this. The organisational infrastructure that receives reports has to change first. Ironically, those who contributed to the research who had some form of structured HF training recognised this gap more than those who hadn’t, and didn’t report as much as you’d think.

The fear hierarchy makes this even clearer. In my research, divers rated their fear of organisational consequences (legal, professional, and reputational) much higher than being judged by their peers. The barrier to reporting is not social, it is institutional, and it exists primarily in the training agency and legal environments within which professional divers operate.

Another potentially counterintuitive perspective is that instructors make this problem of learning from adverse events worse, not better. The research has shown that instructors exhibit significantly more ‘distancing-through-differencing’ when they read incident accounts than any other group; they are more likely to look for reasons why the incident couldn't or doesn't apply to them. They are more likely to conclude "that wouldn't happen to me" than a ‘fun diver’ reading the same account. This means that those in the diving community who you’d think would be most responsible (and effective) for transmitting safety learning to the next generation of divers are the most motivated, psychologically, to distance themselves from adverse events and the conditions associated with them.

This isn’t a personal failing; it is a totally predictable response to the social and professional environment that instructors operate in. There are much wider consequences to this behaviour; when instructors filter their learning, the filtering propagates forward to their students and clients. As a consequence, we miss so many opportunities for learning, inside and outside of an agency’s training activities. Again, this is something I experienced directly when I was involved in a diver training agency.

The Skydiving Comparison

In 1961, skydiving in the US had a fatality rate of approximately 11 per 100,000 jumps. In 2024, it was 0.23. On those figures, that is a reduction of roughly 98% across six decades. They don’t have a government regulator. They do operate in a fragmented commercial training market. They are operating as a discretionary leisure activity. Sound familiar?

The mechanism for change wasn’t better parachutes; it was a learning system. It was the application of a stable classification of events applied annually to each report, producing longitudinal data capable of detecting anomalies and triggering institutional responses. When a specific type of fatality pattern spiked in 2022, the data was there to see it, and the authority to act on it was exercised.
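As a rough sketch of how a stable annual classification can surface a spike, the snippet below compares each year's count in a category against the years before it. This is an illustration under stated assumptions, not a description of how any skydiving or diving body actually does it: the function name, the two-standard-deviation threshold, the minimum of three years of history, and all of the counts are invented.

```python
from statistics import mean, pstdev


def flag_anomalies(yearly_counts, threshold=2.0, min_history=3):
    """Flag years whose count exceeds the prior-year baseline.

    yearly_counts: {category: {year: count}} built from reports that were
    all coded with the same, unchanging classification.
    Returns {category: [flagged years]}.
    """
    flagged = {}
    for category, by_year in yearly_counts.items():
        years = sorted(by_year)
        hits = []
        for i, year in enumerate(years):
            history = [by_year[y] for y in years[:i]]
            if len(history) < min_history:
                continue  # not enough prior years to form a baseline
            baseline = mean(history)
            # Fall back to a spread of 1.0 if every prior year was identical.
            spread = pstdev(history) or 1.0
            if by_year[year] > baseline + threshold * spread:
                hits.append(year)
        if hits:
            flagged[category] = hits
    return flagged


# Invented counts, for illustration only.
counts = {
    "category A": {2018: 4, 2019: 5, 2020: 4, 2021: 5, 2022: 11},
    "category B": {2018: 3, 2019: 2, 2020: 3, 2021: 3, 2022: 2},
}

print(flag_anomalies(counts))
```

With these invented numbers only "category A" is flagged, in its final year. The check is deliberately crude; what matters is that it is impossible to run at all unless the same categories have been applied to every report, every year.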

Those in both diving and sports parachuting might say there are critical differences, especially when it comes to control on the dropzone and parachuting operations because the dropzone safety team can stop operations more easily than someone can in diving. However, that is just one element of a system that has safer operations as a core value.

Fundamentally, the diving sector does not know whether it is getting safer. It does not have a current longitudinal fatality index. It cannot detect anomalies because it cannot see patterns. The DAN annual fatality rate from the early 2000s, 0.45 per 100,000 dives, has not been systematically updated (for many reasons).
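The arithmetic behind a figure like "per 100,000 dives" is trivial, which is exactly why the missing inputs matter. The sketch below is illustrative only: the function name is hypothetical and the inputs are invented round numbers chosen to reproduce a rate of 0.45, not the data behind the DAN figure.

```python
def rate_per_100k(fatalities, exposures):
    """Fatalities per 100,000 exposures (dives or jumps).

    Returns None when either input is unknown, because no rate can be
    stated without both a numerator and a denominator.
    """
    if fatalities is None or not exposures:
        return None
    return fatalities / exposures * 100_000


# Invented inputs: 9 fatalities over 2,000,000 dives.
print(rate_per_100k(9, 2_000_000))

# With no count of dives, there is no rate to report.
print(rate_per_100k(9, None))
```

A longitudinal index is simply this calculation repeated every year with consistently collected inputs.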

We cannot improve what we cannot measure. I would argue that the diving industry is choosing, collectively, not to build the infrastructure that would let us measure it.

Three Things That Would Actually Change This

The paper I have submitted identifies three categories of change that are both necessary and achievable.

The first is a shared language for performance. That means NTS frameworks — decision-making, situational awareness, communication, teamwork, leadership — operationalised for the diving context. It means the frameworks behind the Learning from Emergent Outcomes (LFEO) course (LEODSI and PETTEOT), or equivalent frameworks, become part of how diving incidents are discussed and investigated, not optional tools for those who've done a human factors course. There are now 6 blogs on The Human Diver site that take this approach. Having a common vocabulary means that when a diver tells a story about something that went wrong, the community has the vocabulary to explore the conditions and context around the event rather than stopping at individual blame.

The second is learning infrastructure. This would involve a shared cross-agency adverse event taxonomy, a near-miss reporting pathway with real legal protection, a longitudinal index, investigation methods that look beyond the trigger event, and feedback loops that return learning to training curricula. None of this requires a regulator. It requires the manufacturers, training agencies, and safety organisations to decide, collectively, that they want to know what is actually happening and why, and to support it. Commercial drivers should not influence this decision. A small fee ($1-$2) on top of every certification would provide massive funding for such an independent capability.

The third is an environment that is socially and culturally supportive of preventing and discussing adverse events. There are two parts to that: (1) psychological safety means an environment where it is safe to say “I made a mistake”, “I saw something concerning”, or “I didn't feel right on that dive and I'm not sure why”, and (2) a just culture means events are looked at with curiosity first, not blame and judgment, recognising that divers and instructors don’t go on a dive to get it wrong. Unfortunately, those social and cultural environments do not currently exist at the organisational level in diving, and they will not exist until the leaders of training agencies, dive centres, and safety organisations model the disclosure they are asking everyone else to provide. A senior instructor who says “here is a mistake I made and here is what it revealed” creates a very different culture from one who has never said anything of the sort.

What This Means for You

If you are an instructor, the most immediate application is this: what do you do when a dive goes badly? What do you do when a student makes a significant error? What do you do when you make a significant error? If the answer involves writing as little as possible for legal reasons, your agency's culture is part of the problem described in this paper. If the answer involves a structured, curious, blame-free conversation about what was going on in the system at the time, you are part of the solution.

If you are a dive leader or team diver, the most immediate application is your pre-dive briefing. Not whether you have one, you probably do, but whether it externalises the mental model or assumes it. Does your team agree on turnaround criteria, or do you assume you all have the same threshold? Do you agree on what will make you abort the dive, or do you rely on each other to read the signals? Stated is safer than assumed. Always.

If you are in any position of influence over training standards, agency policy, or incident reporting, and if you are reading this, you may well be, the question is simpler and harder. Are you willing to build what is needed? Or are you waiting for someone else to go first?

The tools are there. The evidence is there. The argument has been made.

If you want to build the skills described in this article — NTS, structured debriefing, systems thinking applied to diving — start with HFiD: Essentials. If you want to understand how to investigate and learn from adverse events in your own diving or organisation, Learning from Emergent Outcomes is the programme designed for that.